Explain This: CrewAI Vulnerability Chain and AI Agent Attack Surface

Four unpatched CVEs in CrewAI expose how AI agent frameworks become attack vectors through prompt injection and code execution chains.

Explain This: CrewAI Vulnerability Chain and AI Agent Attack Surface

AI agent frameworks promise autonomous task execution, but CrewAI's recent vulnerability chain (CERT/CC VU#221883) shows how these tools can become weaponized attack surfaces. Four unpatched CVEs allow attackers to chain prompt injection with code execution, turning helpful agents into hostile actors.

What Is CrewAI?

CrewAI is a Python framework for building multi-agent AI systems. Developers use it to create teams of specialized AI agents that collaborate on complex tasks - think automated research pipelines, content generation workflows, or data analysis chains. Each agent has tools, context, and autonomy.

The problem: that autonomy relies on parsing external inputs, executing code, and making decisions without human oversight. When those mechanisms aren't sandboxed properly, you get a vulnerability chain.

The Vulnerability Chain Explained

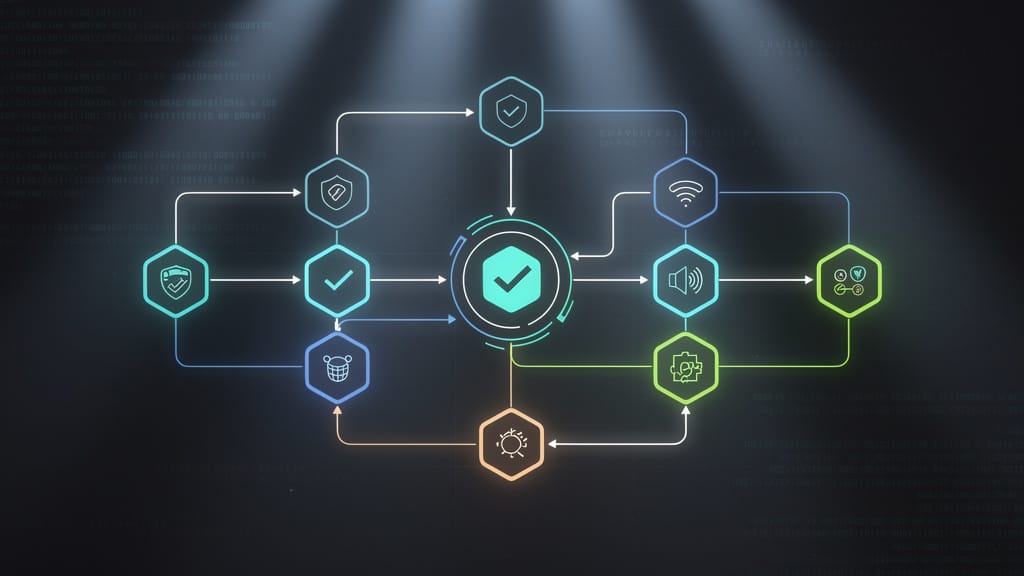

The CERT/CC advisory describes four CVEs working together:

- Prompt injection - Attackers embed malicious instructions in data the agent processes (CSV files, API responses, web scrapes). The agent treats hostile input as legitimate instructions.

- Tool misuse - CrewAI agents have access to tools (file operations, API calls, code execution). Injected prompts can invoke these tools with attacker-controlled parameters.

- Code execution - Some tools allow Python code execution for flexibility. Injected prompts can trigger arbitrary code through these interfaces.

- Privilege escalation - Agents often run with elevated permissions (API keys, database access, filesystem rights). Compromised agents inherit those privileges.

The chain works like this: hostile data → injected prompt → tool invocation → code execution → privilege abuse. Each step amplifies the attack.

Why This Matters for Legal Tech and Compliance Ops

If you're deploying AI agents for contract analysis, compliance monitoring, or legal research, this vulnerability pattern applies directly:

- Contract extraction agents - Process client-uploaded documents. A malicious clause formatted as a prompt could inject commands.

- Compliance monitoring agents - Scrape regulatory websites for updates. A compromised page could inject instructions to exfiltrate data.

- Legal research agents - Query case databases and summarize findings. Poisoned citations could redirect research or leak queries.

- Document automation agents - Generate legal documents from templates. Injected prompts could alter contract terms or embed backdoors.

The unifying risk: these agents operate autonomously on untrusted inputs without proper input validation or sandboxing.

Mitigation Steps (What to Do Now)

If you're running CrewAI or similar frameworks (LangChain, AutoGPT, etc.), take these steps immediately:

- Audit tool access - Review what tools your agents can invoke. Remove unnecessary privileges (file writes, code execution, network access).

- Sandbox code execution - If agents must run code, use isolated containers (Docker, Firecracker) with strict resource limits and no network access.

- Input validation - Treat all external data as hostile. Strip or escape special characters before passing to agents. Use structured formats (JSON schemas) instead of freeform text.

- Monitor agent behavior - Log all tool invocations, API calls, and file operations. Alert on anomalies (unexpected tools, unusual volumes, privilege escalation attempts).

- Version pinning - Don't auto-update AI frameworks. Test updates in staging first, review changelogs for security fixes.

- Implement guardrails - Use prompt filtering (detect injection patterns), rate limiting (prevent abuse loops), and human-in-the-loop approval for sensitive operations.

The core principle: assume your agents will be attacked through their inputs. Design your architecture accordingly.

The Broader Pattern

CrewAI isn't unique - this vulnerability pattern repeats across AI agent frameworks because they share the same design trade-off: autonomy requires trusting inputs and granting tool access. That creates attack surface.

Recent examples: - LangChain RCE via prompt injection (CVE-2026-33017) - AutoGPT arbitrary file write through tool misuse - GPT-4 plugins executing unvalidated code from API responses

The lesson: AI agents are powerful, but they're security-critical infrastructure. Treat them like any other privileged service - principle of least privilege, defense in depth, continuous monitoring.

If your organization is building or deploying AI agents, now is the time to audit your attack surface before threat actors do it for you.